GCSE Revival: A mobile-first revision tool

A mobile-first GCSE revision tool with ten subject tracks.

TL;DR

We built a mobile-first GCSE revision and reintegration tool from scratch for a fifteen-year-old returning to school after extended hospitalization, with ten subject tracks tailored to specific exam boards, a confidence-first design philosophy (week one is reintegration, not content), gamified XP and mastery progression, timed exam-condition exercises, and a PIN-protected parent dashboard tracking session progress and confidence trends.

The brief

What did the client need?

A fifteen-year-old returning to school after extended hospitalization, facing GCSEs in May 2026 with significant gaps in learning and confidence, and roughly eight to ten weeks to close them. The available revision apps assume a baseline of classroom attendance and consistent study habits. None of them account for the emotional weight of reintegration, none of them handle the "I have no idea what I missed" problem, and none of them celebrate what's already there before introducing what's next.

The deeper version of the brief: rebuild belief before content. A revision tool that opens with "you're behind, here's what you missed" opens more learning gaps than it closes. A tool that opens with "look at what you already know" creates the conditions for the rest of the work to land. The same content, reordered, has wildly different emotional outcomes.

The other constraint that mattered: this had to be hers. Not a generic app, not a parental-control retrofit, not something with another child's photo in the marketing screenshots. Something built specifically around her exam boards, her existing knowledge, her medical context, and her stated goal of working in healthcare.

The constraints

What made this hard?

Three constraints. The first was emotional design. Revision apps default to gap-led framing: here's what you don't know, here's the gap, here's the gap closing. For a learner whose confidence has been eroded by months out of school, gap-led framing is corrosive. The whole product had to invert: progress only ever shows what's been unlocked, never what's missing. Mastery levels celebrate. Streaks reward presence. The tool never says "you should know this".

The second was scope. Ten subjects across two exam boards, with curriculum coverage detailed enough to actually replace the hours of classroom instruction missed. Off-the-shelf apps cover broad strokes; this had to handle specific specifications, specific question formats, and specific examiner conventions, because the exam is in May and there's no time for "broadly correct". Manually writing exam-board-specific curriculum content for ten subjects in eight to ten weeks is infeasible. Claude research per topic, structured against the specification, was the only way to ship the depth in the timeframe.

The third was sustainability. A revision tool that takes thirty seconds to load and crashes on a teenager's older Android isn't a revision tool. The whole product had to run on her actual phone, in short sessions, with no app store approval cycle slowing down iteration. Single-file architecture, zero npm dependencies, instant load, inline curriculum content.

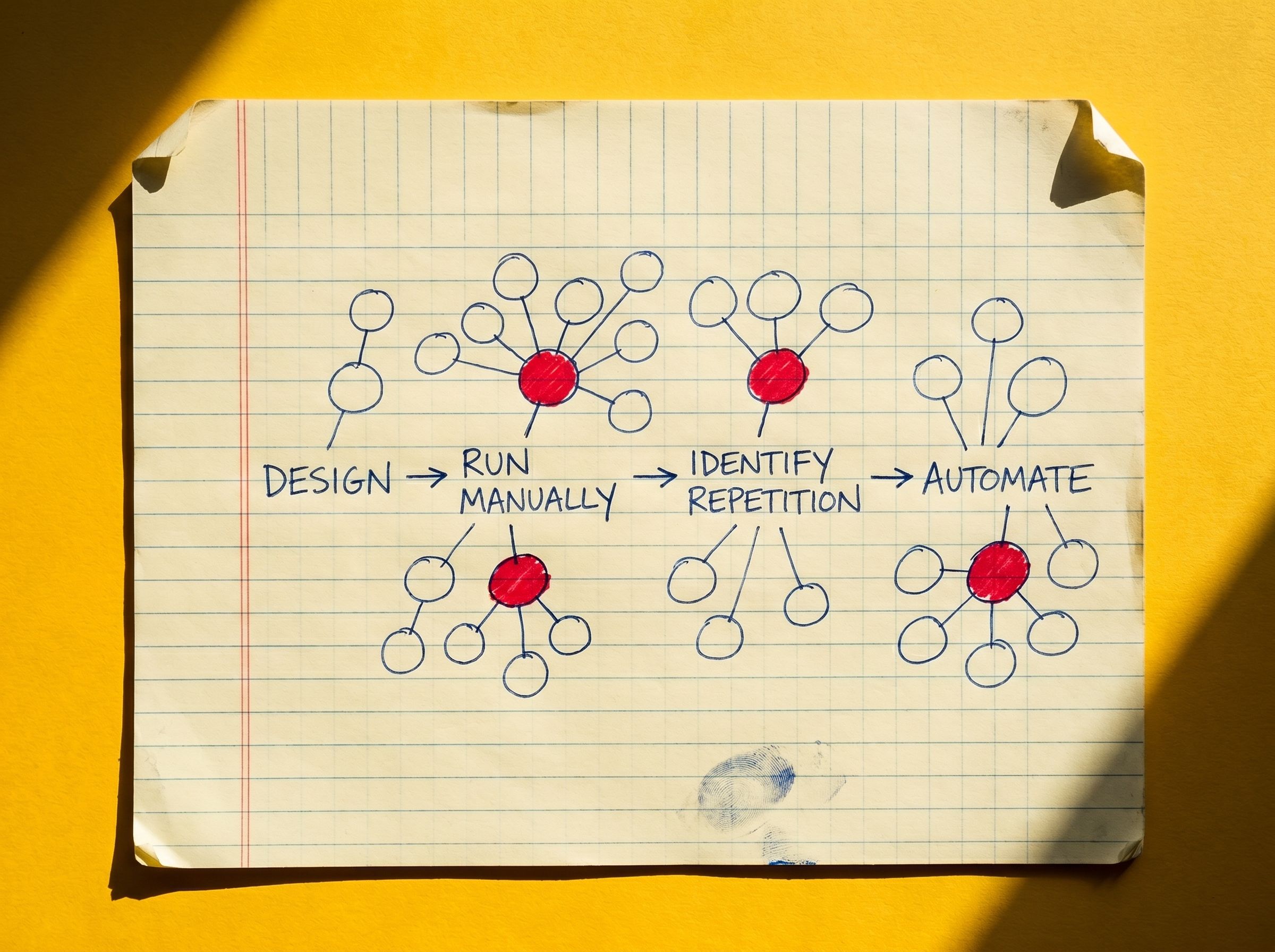

The approach

How did Tincture frame the problem?

A confidence-first revision product, built as a single-page web app she could open like any other website. Week one is entirely reintegration: mapping existing knowledge, celebrating her 46/60 Cambridge National result (achieved while hospitalized), and setting personal targets. Subject content unlocks progressively from week two onwards.
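The week-by-week unlock described above could be expressed as a small filter over track metadata. This is a minimal sketch, not the shipped code: the `unlockWeek` field, the track ids, and every week number other than "week one is reintegration only" are assumptions made for illustration.

```javascript
// Hypothetical unlock schedule. Only "week one is reintegration only" comes
// from the write-up; the other week numbers are illustrative assumptions.
const TRACKS = [
  { id: "back-in-the-room", title: "Back in the Room", unlockWeek: 1 },
  { id: "english-language", title: "English Language", unlockWeek: 2 },
  { id: "maths", title: "Maths", unlockWeek: 2 },
  { id: "combined-science", title: "Combined Science", unlockWeek: 3 },
];

// Returns the titles of tracks available in the given week.
// Locked tracks are omitted entirely rather than listed as "missing".
function visibleTracks(tracks, week) {
  return tracks.filter((t) => t.unlockWeek <= week).map((t) => t.title);
}

console.log(visibleTracks(TRACKS, 1)); // [ 'Back in the Room' ]
console.log(visibleTracks(TRACKS, 2).length); // 3
```

Filtering locked tracks out of the list, instead of rendering them greyed out, is one way to keep the interface from showing what has not been reached yet.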

The progression model is gamified with the deliberate constraint that gamification only ever rewards forward motion. XP for completed sessions. Mastery levels (five per track) for content unlocked. Daily streaks for presence. Milestone rewards for visible markers of progress. Nothing in the UI ever shows what's missing. Nothing penalizes absence.
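A forward-only progression model like the one described above might be sketched as a state object whose fields can only grow. The XP amount and the sessions-per-level threshold below are assumptions; only the cap of five mastery levels per track comes from the text. How the real streak treats a missed day is not stated, so this sketch only counts days present.

```javascript
// Hypothetical values: XP per session and sessions per level are assumptions.
const XP_PER_SESSION = 50;
const SESSIONS_PER_LEVEL = 3;
const MAX_MASTERY = 5; // five mastery levels per track, per the write-up

function newProgress() {
  return { xp: 0, sessionsByTrack: {}, daysPresent: [] };
}

// Returns a new progress object. No branch ever subtracts anything,
// so a missed day simply leaves the state unchanged.
function recordSession(progress, trackId, isoDate) {
  const count = (progress.sessionsByTrack[trackId] || 0) + 1;
  const daysPresent = progress.daysPresent.includes(isoDate)
    ? progress.daysPresent
    : [...progress.daysPresent, isoDate];
  return {
    xp: progress.xp + XP_PER_SESSION,
    sessionsByTrack: { ...progress.sessionsByTrack, [trackId]: count },
    daysPresent,
  };
}

// Mastery is derived from completed sessions and capped at MAX_MASTERY.
function masteryLevel(progress, trackId) {
  const done = progress.sessionsByTrack[trackId] || 0;
  return Math.min(MAX_MASTERY, Math.floor(done / SESSIONS_PER_LEVEL));
}

let progress = newProgress();
progress = recordSession(progress, "maths", "2026-03-02");
progress = recordSession(progress, "maths", "2026-03-02");
progress = recordSession(progress, "maths", "2026-03-04");
console.log(progress.xp, masteryLevel(progress, "maths"), progress.daysPresent.length);
// prints: 150 1 2
```

Because mastery is computed from the session count rather than stored and decremented, there is no code path that can take a level away.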

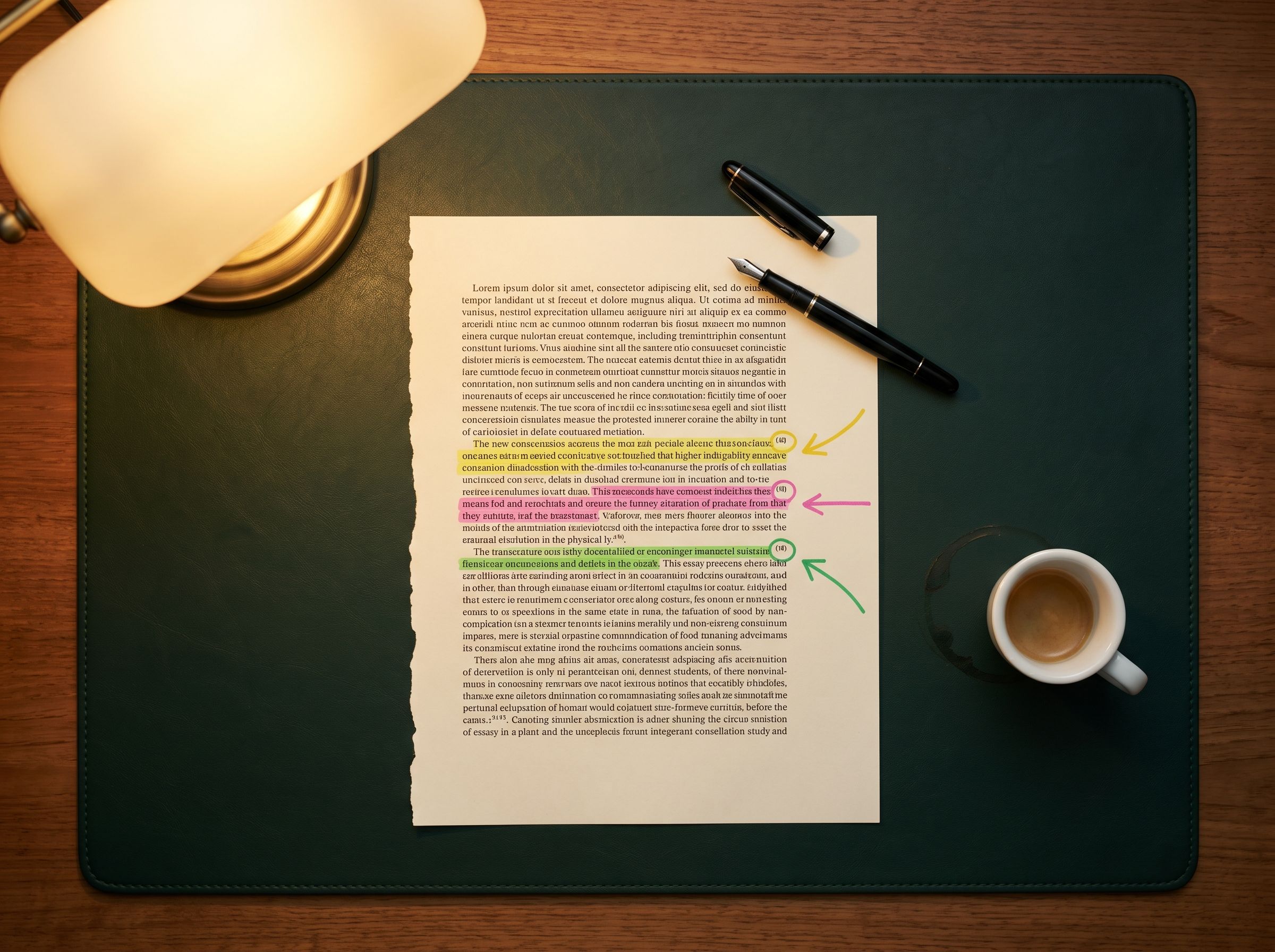

The curriculum content layer is where Claude does the heavy lifting. For each topic across the ten subjects, structured research prompts feed Claude (Claude.ai for deep research, Claude Code for iteration on structuring) with the specific exam-board specification, the question formats commonly used in past papers, and the examiner conventions for marking. Claude returns the topic explanation, worked examples in the exam-board's question format, recall quizzes, application questions, drag-and-sort or fill-in-the-blank interactives, confidence map prompts, embedded BBC Bitesize video links, and curated deeper-resource URLs. The output is reviewed against the specification, edited where the model drifted, and dropped into the inline curriculum as structured data. The research is the time-saver; the review is the quality control. Without the research layer the build doesn't exist; without the review the content drifts off-spec.
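The per-topic prompt scaffolding described above could look something like the following. This is a sketch under stated assumptions: the function name, the field names, and the exact wording of the prompt are hypothetical, and the exam board and specification points in the usage example are placeholders, not the real ones.

```javascript
// Builds one research prompt for one topic. All names here are hypothetical;
// the requested outputs mirror the list in the paragraph above.
function buildResearchPrompt({ subject, examBoard, topic, specPoints, questionFormats }) {
  const outputs = [
    "topic explanation",
    "worked examples in the exam board's question format",
    "recall quiz",
    "application questions",
    "one interactive (drag-and-sort or fill-in-the-blank)",
    "confidence map prompts",
    "BBC Bitesize video links",
    "curated deeper-resource URLs",
  ];
  return [
    `Subject: ${subject} (${examBoard})`,
    `Topic: ${topic}`,
    "Specification points to cover:",
    ...specPoints.map((p) => `- ${p}`),
    "Question formats used in past papers:",
    ...questionFormats.map((f) => `- ${f}`),
    "Return the following as structured data:",
    ...outputs.map((o, i) => `${i + 1}. ${o}`),
    "Stay within the specification points listed and flag anything uncertain.",
  ].join("\n");
}

// Placeholder inputs: the board name and spec points are not the real ones.
const examplePrompt = buildResearchPrompt({
  subject: "Geography",
  examBoard: "EXAMPLE BOARD",
  topic: "Example topic",
  specPoints: ["placeholder specification point A", "placeholder specification point B"],
  questionFormats: ["placeholder short-answer format"],
});
console.log(examplePrompt);
```

Keeping the specification points as explicit input is what lets a reviewer check the returned content line by line against the same list.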

Subject tracks were custom-built to her specific exam boards: English Language, English Literature, Maths, Combined Science, Geography, Cambridge National Health and Social Care, Business, Media Studies, plus a dedicated "Back in the Room" reintegration track and an Exam Technique track. Each session follows a consistent template (explanation, worked example, recall quiz, application quiz, interactive element, video, deeper resources) and runs at 20 to 30 minutes per session, so any one of them is finishable in a single sitting on limited energy.

A healthcare motivation thread runs through the whole product. Her stated goal is to work in healthcare, so cross-curricular links connect content back to it (Dickens's social argument in A Christmas Carol links to the founding of the NHS, biology covers human physiology, business covers healthcare organizations). The motivation isn't a stated learning objective; it's woven into the content itself.

The build

What was shipped?

A single 9,800-line HTML file. No build step, no npm, no frameworks. HTML5, CSS3, and vanilla JavaScript, with all curriculum content inline so sessions load instantly with no external CMS dependency. Deployed to Vercel. Supabase Postgres handles progress tracking, gamification state, and parent-dashboard data, with row-level security for the PIN-protected parent view.

Ten subject tracks, each with full curriculum content written to its exam board's specification. The content was generated through the structured Claude research pipeline (scoped against the specification, the question formats, and the examiner conventions), reviewed against the spec, and dropped into the inline curriculum as structured data. Each session follows a consistent template: topic explanation, worked example in the exam-board's question format, recall quiz, application quiz, interactive element (drag-and-sort, fill-in-the-blank, or confidence map), embedded BBC Bitesize video, deeper-resource links.
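Since every session follows the same template, the inline curriculum data lends itself to a mechanical completeness check before the human review. The sketch below assumes a hypothetical object shape (`explanation`, `workedExample`, and so on, plus a `minutes` field); the real data structure is not shown in this write-up.

```javascript
// Hypothetical field names for the seven template parts listed above.
const REQUIRED_PARTS = [
  "explanation", "workedExample", "recallQuiz", "applicationQuiz",
  "interactive", "video", "deeperResources",
];
const INTERACTIVE_TYPES = ["drag-and-sort", "fill-in-the-blank", "confidence-map"];

// Returns a list of problems; an empty list means the session fits the template.
function validateSession(session) {
  const problems = [];
  for (const part of REQUIRED_PARTS) {
    if (session[part] === undefined || session[part] === null) {
      problems.push(`missing ${part}`);
    }
  }
  if (session.interactive && !INTERACTIVE_TYPES.includes(session.interactive.type)) {
    problems.push(`unknown interactive type: ${session.interactive.type}`);
  }
  // Sessions are meant to run 20 to 30 minutes.
  if (!(session.minutes >= 20 && session.minutes <= 30)) {
    problems.push("session length should be 20 to 30 minutes");
  }
  return problems;
}

// Placeholder content only; not taken from the real curriculum.
const exampleSession = {
  explanation: "placeholder explanation",
  workedExample: "placeholder worked example",
  recallQuiz: [{ q: "placeholder question", a: "placeholder answer" }],
  applicationQuiz: [{ q: "placeholder question", a: "placeholder answer" }],
  interactive: { type: "drag-and-sort", items: ["a", "b", "c"] },
  video: "placeholder video link",
  deeperResources: ["placeholder resource link"],
  minutes: 25,
};
console.log(validateSession(exampleSession)); // []
```

A check like this only confirms shape; whether the content matches the specification still needs the manual review described above.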

Multiple exercise types across the curriculum: recall, structured writing, fill-in-the-blank, drag-and-sort, timed exam-condition sprints, confidence maps, and interactive diagrams. Embedded BBC Bitesize videos and curated external resources within each session.

A PIN-protected parent dashboard accessible via `?parent` URL, showing read-only session progress, mastery levels, and confidence trends over time. Designed so a parent can see what's happening without intruding on the learner's space.
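Detecting the `?parent` flag and shaping a read-only summary could be sketched as below. The function names, the `confidence` field, and the trend calculation (last rating minus first) are assumptions for illustration. PIN verification is deliberately left out: it belongs on the data side, which the write-up says is covered by row-level security in Supabase.

```javascript
// True when the page URL carries the bare ?parent flag.
function isParentView(href) {
  return new URL(href).searchParams.has("parent");
}

// Builds a frozen summary from session records.
// Assumes each record has a numeric `confidence` rating (hypothetical field).
function parentSummary(sessions) {
  const ratings = sessions.map((s) => s.confidence);
  const trend = ratings.length > 1 ? ratings[ratings.length - 1] - ratings[0] : 0;
  return Object.freeze({
    sessionsCompleted: sessions.length,
    confidenceTrend: trend,
  });
}

console.log(isParentView("https://example.com/?parent")); // true
console.log(isParentView("https://example.com/")); // false
console.log(parentSummary([{ confidence: 2 }, { confidence: 3 }, { confidence: 4 }]));
// { sessionsCompleted: 3, confidenceTrend: 2 }
```

Freezing the summary object is a small guard that keeps the dashboard code from mutating learner data by accident; it is not a substitute for server-side access rules.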

A reintegration track ("Back in the Room") that opens the product for the first week. An Exam Technique track for paper-by-paper preparation. Confidence-first progression that unlocks subject content gradually.

The outcome

What were the results?

Live and in daily use. Sessions load on her phone in under a second. Progress, mastery, and confidence data flow into the parent dashboard. The reintegration content has visibly shifted the conversation around revision from "what I'm behind on" to "what I've covered this week".

The single-file architecture earned its keep. There's no build pipeline, no app store approval, no version-mismatch bug. When something needs to change, the change ships in the time it takes to push to Vercel. That iteration speed is what makes this kind of build economically possible for a one-user product.

The Claude research layer earned its keep on coverage. Ten subjects across two exam boards, with specific specifications and specific examiner conventions, would have been a six-month writing project without it. Structured research prompts per topic, reviewed and dropped into the inline curriculum, compressed that into the actual build window. The depth on her phone is the depth a tutor would prepare; the speed it took to get there is what makes the product feasible.

The deeper outcome is one that doesn't show up in metrics. The tool was built specifically for her and her situation. Generic edtech products optimize for the median user; this one optimizes for the actual user. That asymmetry is the whole point. Most education tools fail not because the content is wrong but because the framing is hostile to the learner who actually needs them most.

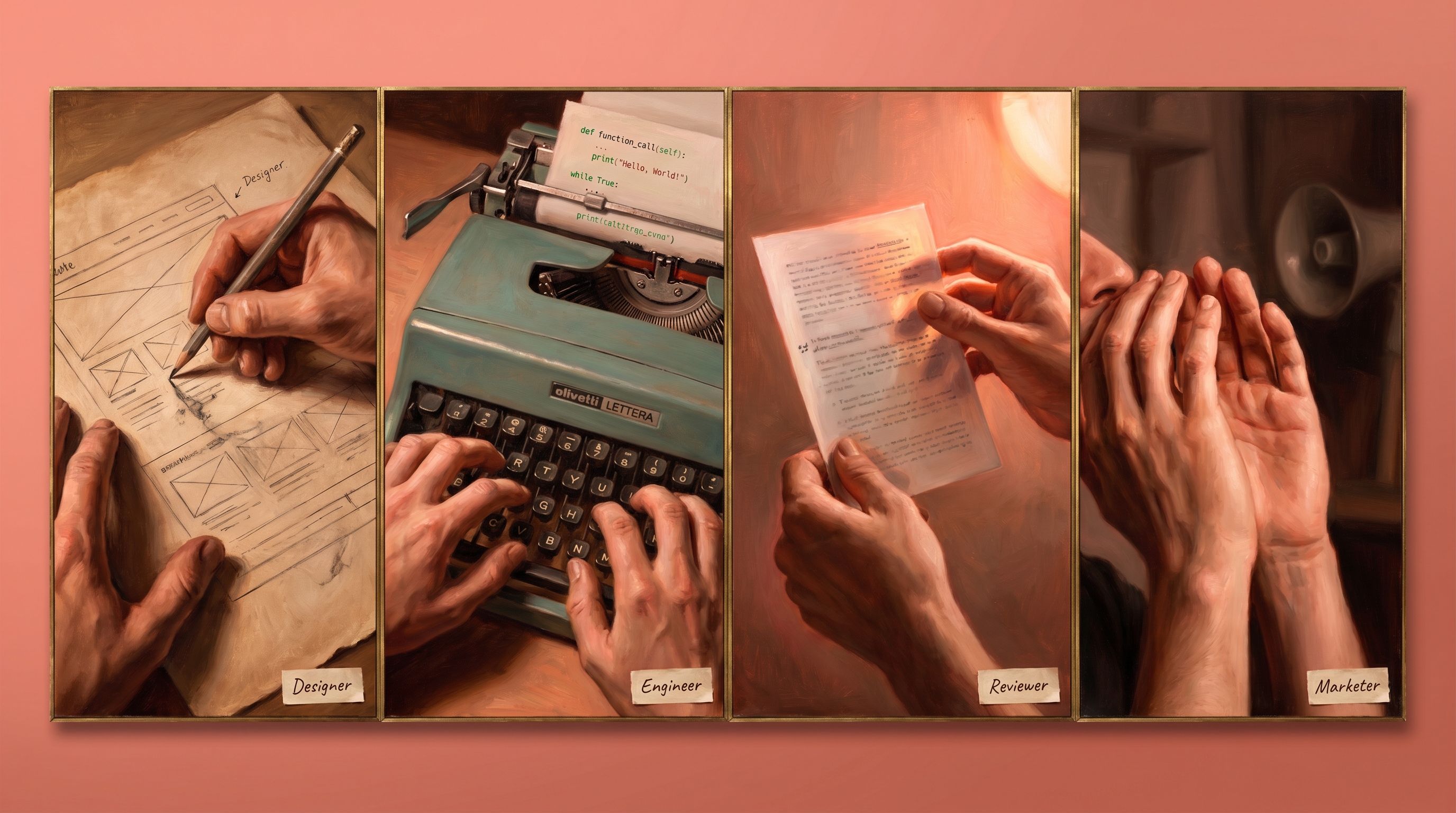

What it took

What tools and methods were used?

Cursor and Claude Code for the build itself. Claude (Claude.ai for deep research, Claude Code for structuring iteration) for the per-topic research pipeline that generated the curriculum content, with each topic prompt scoped against the specific exam-board specification, the question formats commonly used, and the examiner conventions for marking. HTML5, CSS3, vanilla JavaScript. Supabase for Postgres and authentication. Vercel for deployment. No frameworks, no npm dependencies, no build step. The whole architectural decision was that this product had to load instantly on a teenager's phone, and every framework choice that adds a millisecond to load time is a framework choice that loses sessions.

The methodological underpinning is the practice's pattern for any user-of-one product: build with the same rigor as a product for ten thousand, but optimize the architecture for the actual constraint, which here was responsiveness on a single device under specific energy and emotional conditions.

The other move worth naming: AI as the curriculum research and structuring layer, with the boundary drawn deliberately. Claude does the lookup, the structuring, the question generation, and the resource curation. The human does the review against the specification, the editorial judgment on emotional tone, and the final say on what ships. That boundary is the right one for AI in education content: research and structuring as model work, judgment and review as human work. Get the boundary wrong in either direction and the product collapses (manual writing is too slow; unstructured AI drifts off-spec).

The takeaway

What's the transferable principle?

Most education products fail their actual users because they're optimized for the average user. Average means a classroom-attending, consistently studying, emotionally stable, neurotypical learner with reliable internet and a recent device. Almost none of the learners who need a revision tool most actually fit that profile.

For GCSE Revival, that meant inverting the design defaults. Confidence-first instead of gap-led. Single-file instead of frameworked. Inline content instead of CMS-loaded. Gamification that only rewards forward motion. The same architectural decisions, made in the opposite direction, would have produced a tool that didn't get used.

The second transferable principle, broader than education: when you're building for a specific user with specific constraints, the constraints are the design brief, not the limitations. The fact that this product had to work on her phone in twenty-minute sessions while she's still rebuilding stamina is the reason it got built the way it did. The constraints made the product better, not worse.

The third principle, specific to AI in content-heavy products: structured research per topic, reviewed against the specification, beats both manual writing (too slow) and unstructured AI generation (drifts off-spec). The pipeline is the model: scope the research prompt against the actual constraint (here, exam-board specification), let Claude do the lookup, the structuring, and the question generation, then review the output before it ships. The work that compounds is the prompt scaffolding, not the per-topic content.

Building for a user-of-one with specific constraints?

The same architecture pattern (Claude research per topic, single-file delivery, confidence-first design) works for any product where the user is specific and the constraints are the design brief.