
You Don't Rank on Google, You Get Cited by Claude: Generative Engine Optimization

Alice B

You can't afford the old SEO math. Not at this stage. The traditional version works like this: hire a writer, build a backlink strategy, publish consistently, wait six to eight months for Google to decide your domain is worth trusting, and then maybe you start ranking. It's a factory model. It was designed for companies with content teams, patience, and a long enough runway to treat distribution as infrastructure. Most founders, pre-Series A, don't have any of those things.

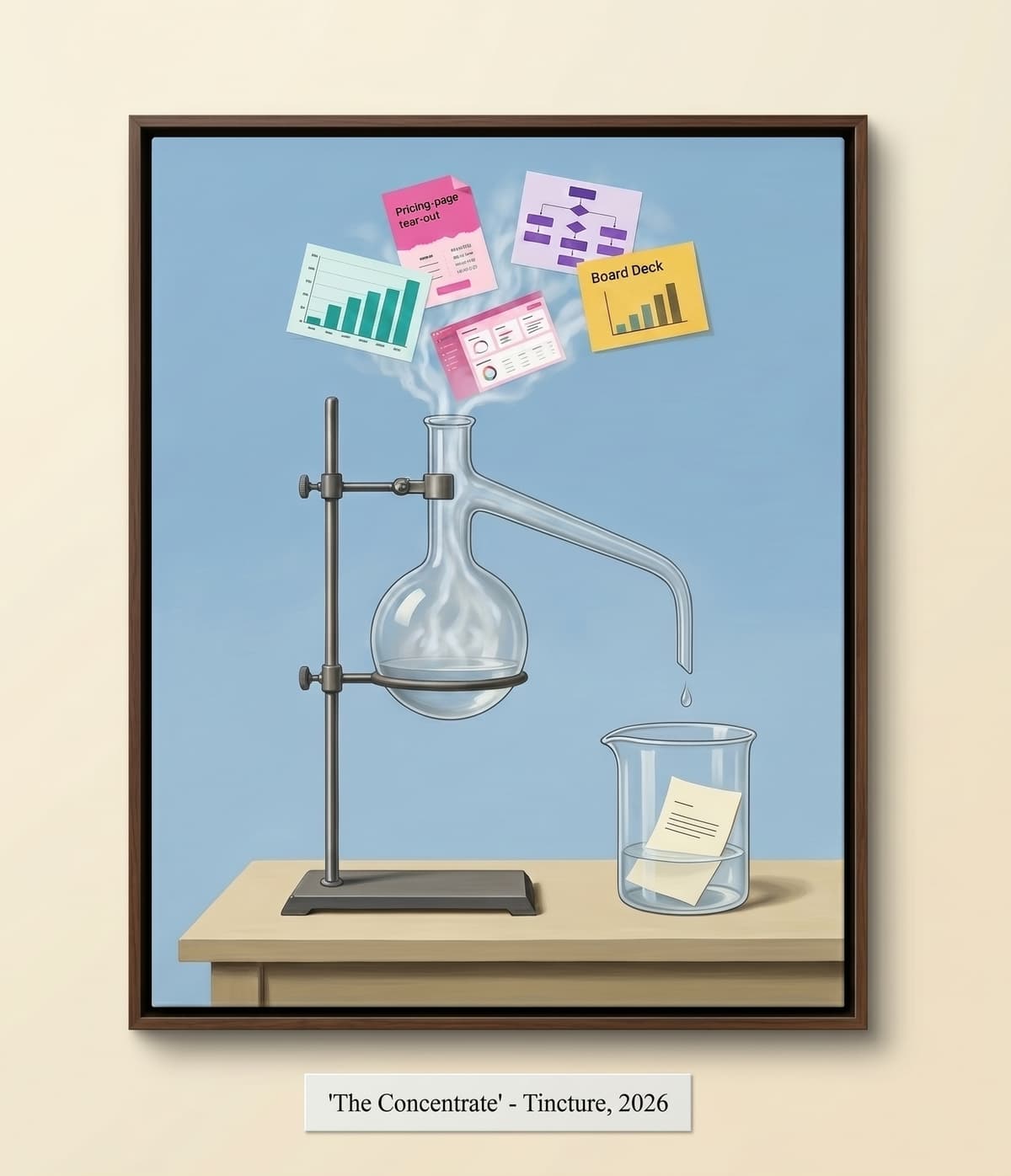

And there's a structural problem nobody was saying out loud until recently: Google is no longer the only index that matters, and the other one runs on a completely different clock. Buyers are asking questions in chat windows now. Claude, ChatGPT, Perplexity, Gemini. They're typing the same questions they used to Google, and the models are answering them. When those models answer, they cite sources. They link. They quote. They send traffic that arrives pre-qualified, because the model already did the screening before your URL appeared.

When these models search the web to answer, the index they draw on refreshes in days, sometimes hours. You can publish a well-structured page on Tuesday and see it quoted back to a stranger in a Claude response by Thursday. That's not a theory; that's the cycle time now. Here's how to get cited by AI models before your competitors figure out the game has changed.

Why AI Models Cite Some Pages and Skip Others

Google ranked on backlinks, domain age, and keyword density. Those signals took months to accumulate, which is why the eight-month wait existed. Generative engines rank on something different: quotability. A language model generating a response needs specific things to cite:

- A clean, definitive sentence it can lift verbatim without mangling the meaning

- A number with a source attached to it

- A named framework or taxonomy that gives its answer structure

- Clear steps or criteria it can reproduce as a list

What it skips: walls of keyword-optimized prose written for a crawler, vague thought-leadership paragraphs that gesture toward an idea without committing to one, and content that takes 400 words to say what could be said in 40. What makes content quotable is specificity, not length, domain authority, or publishing frequency. One sentence with a precise claim and a clear source is worth more to an LLM than ten paragraphs of qualified hedging.
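You can turn the list above into a rough self-check before you publish. The sketch below is a heuristic, not a description of how any model actually picks citations: it assumes that a digit in the sentence, a length of 25 words or fewer (an arbitrary cutoff), and the absence of words from a hypothetical hedge list are reasonable proxies for "quotable."

```python
import re

# Hypothetical hedge vocabulary: extend it with your own verbal tics.
HEDGE_WORDS = {"might", "perhaps", "arguably", "somewhat", "possibly", "generally", "often"}
MAX_WORDS = 25  # assumed cutoff for "short enough to lift verbatim"


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def quotability_score(sentence: str) -> int:
    """Score 0-4 on three proxies: a specific number, brevity, and no hedging."""
    words = re.findall(r"[a-z0-9'%]+", sentence.lower())
    score = 0
    if re.search(r"\d", sentence):            # contains a specific number (weighted double)
        score += 2
    if len(words) <= MAX_WORDS:               # short enough to quote whole
        score += 1
    if not HEDGE_WORDS.intersection(words):   # commits to a claim
        score += 1
    return score


def most_quotable(text: str, top_n: int = 3) -> list[tuple[int, str]]:
    """Return the top_n sentences by score; ties keep their original order."""
    scored = [(quotability_score(s), s) for s in split_sentences(text)]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_n]
```

If nothing on a page scores a 4, treat that as a hint to add a number or cut a hedge, not as proof that a model will skip it.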

The New Ranking Report: How to Check Whether You're Being Cited

The old SEO feedback loop was brutal. You'd publish in January, check your rankings in March, and if nothing had moved, you had no idea whether the problem was the content, the backlinks, the keyword choice, or just Google being slow. Monthly review cycles on a six-month lag. The new Generative Engine Optimization feedback loop runs in 48 hours, and your dashboard is free.

How to run your ranking report

- Open Claude, ChatGPT, or Perplexity — use all three if you want coverage

- Type the exact question your ideal customer would ask during discovery. Not a keyword. A question.

- Read the response. See who gets cited. See what gets quoted.

- Note what made the cited content quotable: usually a specific number, a named framework, or a definition the model can reproduce cleanly.

- Ask yourself: is your content in that response? Is your competitor's? Is a Reddit thread from 2019?
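Reading the response is the report. If you paste responses into a file to compare them week over week, a short script can tally which domains got linked. This is a minimal sketch that assumes the pasted text still contains the source URLs as raw links or markdown links (not every chat interface copies citations that way); `acme.dev` and `rival.io` are placeholder domains.

```python
import re
from urllib.parse import urlparse

# Matches raw URLs, including those inside markdown links like [text](https://...).
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def cited_domains(response_text: str) -> list[str]:
    """Distinct domains linked in a pasted model response, in order of first appearance."""
    domains: list[str] = []
    for url in URL_PATTERN.findall(response_text):
        host = (urlparse(url).hostname or "").removeprefix("www.")
        if host and host not in domains:
            domains.append(host)
    return domains


def citation_report(response_text: str, you: str, competitors: list[str]) -> dict[str, bool]:
    """For your domain and each competitor: was it (or a subdomain of it) cited?"""
    found = cited_domains(response_text)
    return {
        domain: any(f == domain or f.endswith("." + domain) for f in found)
        for domain in [you, *competitors]
    }
```

Counting subdomains toward the parent domain means a cited `docs.acme.dev` page shows up as a win for `acme.dev`, which is usually what you want when your docs live on a subdomain.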

When a five-year-old Reddit thread outranks your published content in an AI model's answer, it's usually because the thread had a specific, firsthand answer to a real question, while your content had keyword-optimized paragraphs. One founder who rewrote their documentation to match that structure found Claude citing them instead within a week.

The Practice: How to Optimize for AI Citation in One Afternoon Per Week

This isn't a campaign. It's a weekly Generative Engine Optimization habit with a two-day feedback cycle.

Step 1: Pick one buyer question per week

Pull it from your actual sales calls. What does a prospect ask in the first 20 minutes? 'How is this different from [competitor]?' 'What kind of results should I expect in the first 90 days?' Those are your prompts. Not keyword tools. Sales calls.
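If your calls are recorded and transcribed, a few lines of code can surface the questions prospects keep repeating. This sketch assumes plain-text transcripts with one speaker turn per line and a leading label such as `Prospect:`; real transcription exports vary, so adjust the parsing to yours. "Rival" is a placeholder competitor.

```python
import re
from collections import Counter


def extract_questions(transcript: str) -> list[str]:
    """Every sentence ending in '?' from a plain-text transcript, one speaker turn per line."""
    questions = []
    for line in transcript.splitlines():
        # Drop a leading speaker label such as "Prospect:" (assumed transcript format).
        line = re.sub(r"^\s*\w+:\s*", "", line)
        for sentence in re.split(r"(?<=[.!?])\s+", line.strip()):
            if sentence.endswith("?"):
                questions.append(sentence)
    return questions


def most_asked(transcripts: list[str], top_n: int = 5) -> list[tuple[str, int]]:
    """Count questions across calls, normalizing case and the trailing '?'."""
    counts = Counter(
        q.lower().rstrip("?").strip()
        for t in transcripts
        for q in extract_questions(t)
    )
    return counts.most_common(top_n)
```

Exact-match counting is deliberately crude: two prospects who phrase the same question differently count separately, so skim the full list rather than trusting only the top entry.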

Step 2: Write one page that answers it the way a model wants

The structure that gets cited: one clear definition or claim in the first paragraph, a numbered list or named framework in the body, at least one specific number with context, and a closing sentence a founder would screenshot. Length doesn't matter. 400 words with three citable elements beats 2,000 words of padded prose. Write the line first. Build the explanation around it.
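The four-part structure described above can be checked mechanically before you publish. This is a minimal sketch with crude, assumed proxies: an "is" or "are" in the first paragraph stands in for a definition, list markers in the middle paragraphs stand in for a framework, and a closing paragraph of 30 words or fewer stands in for the screenshot line. Length is not checked, matching the point that length doesn't matter.

```python
import re


def check_page(text: str) -> dict[str, bool]:
    """Check a draft against the four-part structure (heuristic proxies, not a model's criteria)."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text.strip()) if p.strip()]
    first = paragraphs[0] if paragraphs else ""
    body = "\n".join(paragraphs[1:-1])
    last = paragraphs[-1] if len(paragraphs) > 1 else ""
    # Ignore list markers like "1." so a numbered list alone doesn't count as a statistic.
    prose = re.sub(r"^\s*\d+\.\s+", "", text, flags=re.MULTILINE)
    return {
        # Crude proxy for "a definition or claim up front": an "is"/"are" in paragraph one.
        "claim_first": bool(re.search(r"\b(is|are)\b", first, re.IGNORECASE)),
        # A numbered or bulleted list somewhere between the first and last paragraphs.
        "list_in_body": bool(re.search(r"^\s*(\d+\.|[-*])\s+", body, re.MULTILINE)),
        # At least one specific number outside list markers.
        "has_number": bool(re.search(r"\d", prose)),
        # A short standalone closing line (assumed cutoff: 30 words).
        "short_close": 0 < len(last.split()) <= 30,
    }
```

A page can pass every check and still say nothing worth quoting; the checker only catches the structural omissions.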

Step 3: Publish it somewhere crawlable

Your own site is fine. Substack is fine. LinkedIn articles are crawled. A well-structured GitHub README gets cited regularly. You don't need a domain with ten years of trust. You need a page the model can read and a sentence it wants to use.
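"Crawlable" also means your robots.txt doesn't block the crawlers AI products use. Python's standard library can evaluate a robots.txt file for you. The user-agent list below is an assumption based on names the vendors have published; check each vendor's current documentation, since these change. Note that `Google-Extended` is a robots.txt control token rather than a separate fetching crawler.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI crawler tokens; verify against each vendor's documentation.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, page_url: str) -> dict[str, bool]:
    """Which AI user agents would this robots.txt allow to fetch page_url?"""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, page_url) for agent in AI_AGENTS}
```

Paste in the text of your live robots.txt, or let `RobotFileParser` fetch it itself with `set_url()` and `read()`. Any `False` in the result for a page you want cited is worth investigating before you rewrite the content.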

Step 4: Wait 48 hours, then run your ranking report

Ask the original question in Claude. If you're cited: good, repeat with the next buyer question on your list. If you're not: read what did get cited, identify the quotable element, rewrite your page with that structure, check again in 48 hours.
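The loop in this step is small enough to write down as a state machine, which helps if you hand it off to someone else. `BuyerQuestionPage` is a hypothetical name for illustration; the 48-hour delay is the article's suggested wait, not a guarantee of when a model will pick up a change.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CHECK_AFTER = timedelta(hours=48)  # the wait suggested in this step


@dataclass
class BuyerQuestionPage:
    question: str
    published: datetime
    cited: Optional[bool] = None  # None until you've run the ranking report

    def next_action(self, now: datetime) -> str:
        """Decide the next step of the weekly loop."""
        if now < self.published + CHECK_AFTER:
            return "wait"
        if self.cited is None:
            return "run ranking report"
        return "move to next question" if self.cited else "rewrite and recheck"

    def rewrite(self, now: datetime) -> None:
        """A rewrite restarts the 48-hour clock and clears the old result."""
        self.published = now
        self.cited = None
```

Resetting the clock on every rewrite keeps you from judging a revised page by a report you ran against the old version.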

Step 5: Repeat weekly or delegate it

Eight weeks of this practice means eight pages answering eight buyer questions, each tuned for quotability. The model now has a body of your material to draw from when answering questions in your category. You become legible to it before your competitors do, and that advantage compounds week over week.

Why Technical Founders Have an Advantage Here

The old SEO asked you to run a content factory: consistent publishing volume, keyword research, link-building outreach, a managing editor. It was built for marketers. Technical founders found it alienating because it rewarded the process over the idea, and because the feedback loop was too slow to feel like engineering.

The new Generative Engine Optimization version rewards precision over volume. It rewards defining things exactly, naming frameworks, stating claims clearly, and cutting the hedge. Those are technical skills. The question 'what is the minimum viable description of this concept that a non-expert could act on?' is the same question you ask when writing documentation. You already know how to do this.

The window right now is wide open. Most of your competitors are still pouring budget into the old game, optimizing for a search engine that's losing its monopoly on the question. You don't have to beat them at Google. You have to be legible to the model before they are. That's a shorter race.

Where to Start This Week

Pick one question from your last three sales calls. Write 400 words that answer it precisely. Make sure there's one sentence in there you'd put in a pitch deck. Publish it. Ask Claude the question in 48 hours. That's the whole practice.

Frequently asked questions

Why do AI models cite some pages and skip others?

LLMs rank on quotability, not domain authority or backlinks. They want a clean, definitive sentence they can lift verbatim, a number with a source, a named framework, and clear steps they can reproduce. Content with 400 words of keyword-optimized generalities gets skipped. One sentence with a precise claim and a clear source is worth more than ten paragraphs of qualified hedging.

How quickly can content get cited by an AI model after publishing?

The index behind AI answers refreshes in days, sometimes hours, compared to six to eight months for Google to trust a new domain. Publish a well-structured page on Tuesday and it can appear in a Claude or ChatGPT response by Thursday. The feedback loop is 48 hours, not a quarterly review cycle.

How do I check whether my content is being cited by Claude or ChatGPT?

Open Claude, ChatGPT, or Perplexity and type the exact question your ideal customer would ask during a sales call — not a keyword, a real question. Read the response and see who gets cited. Note what made the cited content quotable. This is your free ranking report, and it updates in near-real-time.

Do technical founders have a natural advantage with Generative Engine Optimization?

Yes. The old SEO rewarded process: consistent volume, link-building, keyword density, all of which felt like factory work to most technical founders. Generative Engine Optimization rewards precision: defining things exactly, naming frameworks, stating claims clearly, cutting the hedge. Those are documentation skills. Technical founders already know how to do this.

What type of content gets cited by AI models?

Content with specific, named things in it: a clear definition in the first paragraph, a numbered list or named framework in the body, at least one specific number with context, and a closing sentence clear enough to screenshot. Length doesn't matter. 400 words with three citable elements beats 2,000 words of padded prose.