Reddit market intelligence engine

A weekly Reddit market intelligence engine across trends, competitor SOV, complaints, and pricing

TL;DR

Tincture built a once-weekly automated market intelligence engine for Adamas Studio that scrapes five lab-diamond subreddits (1-1.5k posts, ~20k comments, 30-40k data points per week), extracts structured entities, and delivers actionable intelligence to a Notion dashboard every Monday. The engine covers the four dimensions the brand uses to make commercial decisions: trend detection (what's emerging or fading), competitor share of voice (who's getting talked about, why, and how), common complaints (where customers are unhappy with the category, surfaced so Adamas can proactively resolve them in product, content, or service), and qualitative pricing intelligence (where the market is settling, what customers consider fair). Built on Python, PRAW, Supabase, ChatGPT API, and GitHub Actions.

The brief

What did the client need?

Reddit is where the lab-grown diamond market argues with itself in public. Customers compare vendors, debate cuts, complain about service, share prices, and reveal exactly which alternatives they're considering, all in language that doesn't show up anywhere else. For a brand like Adamas Studio operating in a category where the buyers are technically informed and forum-active, the Reddit conversation isn't anecdotal; it's commercial intelligence sitting in plain sight.

The brief was to convert that signal into structured weekly intelligence across four dimensions. Trend detection: what's emerging in the category, what's fading. Competitor research: vendor share of voice, who's getting talked about and how. Common complaints: where customers are unhappy with the category as a whole, and where Adamas can proactively resolve those complaints in product, content, or customer service. Qualitative pricing intelligence: where the market is settling, what customers consider fair, where competitors are pricing.

The deeper version of the brief: build an intelligence engine that pays for itself in commercial decisions. Pricing decisions, content decisions, product decisions, customer service decisions, competitor monitoring. Every meeting where someone asks "what's happening with vendor X right now?" or "what are customers complaining about this week?" should already have an answer waiting.

The constraints

What made this hard?

Three constraints. The first was data volume. Five subreddits, monitored weekly, generate roughly 1,000 to 1,500 posts and 20,000 comments per week, totalling 30,000 to 40,000 individual data points. That's a deduplication, rate-limiting, and storage problem before it's an analysis problem. The pipeline had to be idempotent so a re-run never duplicated entries, and respectful enough of the Reddit API's rate limits that it never got throttled.
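A minimal sketch of the idempotency idea, assuming every post or comment is keyed by its unique Reddit ID and the store enforces a primary key on it. SQLite from the standard library stands in for Supabase Postgres here, and the table name, column names, and sample IDs are illustrative rather than the production schema.

```python
import sqlite3


def make_store() -> sqlite3.Connection:
    """Create an in-memory stand-in for the state store (assumed schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE IF NOT EXISTS items ("
        " reddit_id TEXT PRIMARY KEY,"
        " subreddit TEXT NOT NULL,"
        " body TEXT NOT NULL)"
    )
    return conn


def ingest(conn: sqlite3.Connection, rows: list[tuple[str, str, str]]) -> int:
    """Insert rows, silently skipping IDs already stored.

    Returns the number of rows that were actually new, so a re-run of the
    same batch reports 0 and leaves the table unchanged.
    """
    before = conn.execute("SELECT COUNT(*) FROM items").fetchone()[0]
    conn.executemany(
        "INSERT OR IGNORE INTO items (reddit_id, subreddit, body) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()
    after = conn.execute("SELECT COUNT(*) FROM items").fetchone()[0]
    return after - before


conn = make_store()
batch = [
    ("t3_a1", "example_sub", "a post"),
    ("t1_b2", "example_sub", "a comment"),
]
first_run = ingest(conn, batch)   # both rows are new
second_run = ingest(conn, batch)  # same batch again: nothing is added
```

Because the primary key does the deduplication, the job can be re-run after a partial failure without any cleanup step.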

The second was entity extraction without LLM cost. Running 30,000 to 40,000 data points per week through a language model for entity extraction would burn budget that a single brand cannot justify. The first version of the pipeline runs rule-based extraction on the structured entities (vendors, shapes, settings, metals, prices, certifications) because those are predictable enough to pattern-match. The LLM layer is reserved for the harder consolidation jobs where rules fail, including complaint clustering and sentiment.
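To illustrate what pattern-matching the structured entities can look like, here is a small sketch using regular expressions. The shape and certification vocabularies and the price and carat patterns are assumptions chosen for the example; the production rule set is not described in this write-up.

```python
import re

# Illustrative vocabularies (assumed, not the production lists).
SHAPES = ("round", "oval", "emerald", "cushion", "pear", "radiant")
CERTS = ("IGI", "GIA", "GCAL")

# "$1,850" or "$2400"; the optional cents part is matched but not captured.
PRICE_RE = re.compile(r"\$\s?(\d{1,3}(?:,\d{3})+|\d+)(?:\.\d{2})?")
# "2.1ct", "2 carat", "1.5 carats"
CARAT_RE = re.compile(r"(\d+(?:\.\d+)?)\s?(?:ct|carat)s?\b", re.IGNORECASE)
SHAPE_RE = re.compile(r"\b(" + "|".join(SHAPES) + r")\b", re.IGNORECASE)
CERT_RE = re.compile(r"\b(" + "|".join(CERTS) + r")\b")


def extract_entities(text: str) -> dict:
    """Pull prices, carat weights, shapes, and certifications from free text."""
    return {
        "prices": [int(p.replace(",", "")) for p in PRICE_RE.findall(text)],
        "carats": [float(c) for c in CARAT_RE.findall(text)],
        "shapes": sorted({s.lower() for s in SHAPE_RE.findall(text)}),
        "certifications": sorted(set(CERT_RE.findall(text))),
    }


sample = (
    "Paid $1,850 for a 2.1ct oval, IGI certified. "
    "Quoted $2400 elsewhere for a 2 carat round."
)
entities = extract_entities(sample)
```

Rules like these run in microseconds per comment, which is why this layer can cover the full weekly volume before anything is sent to a language model.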

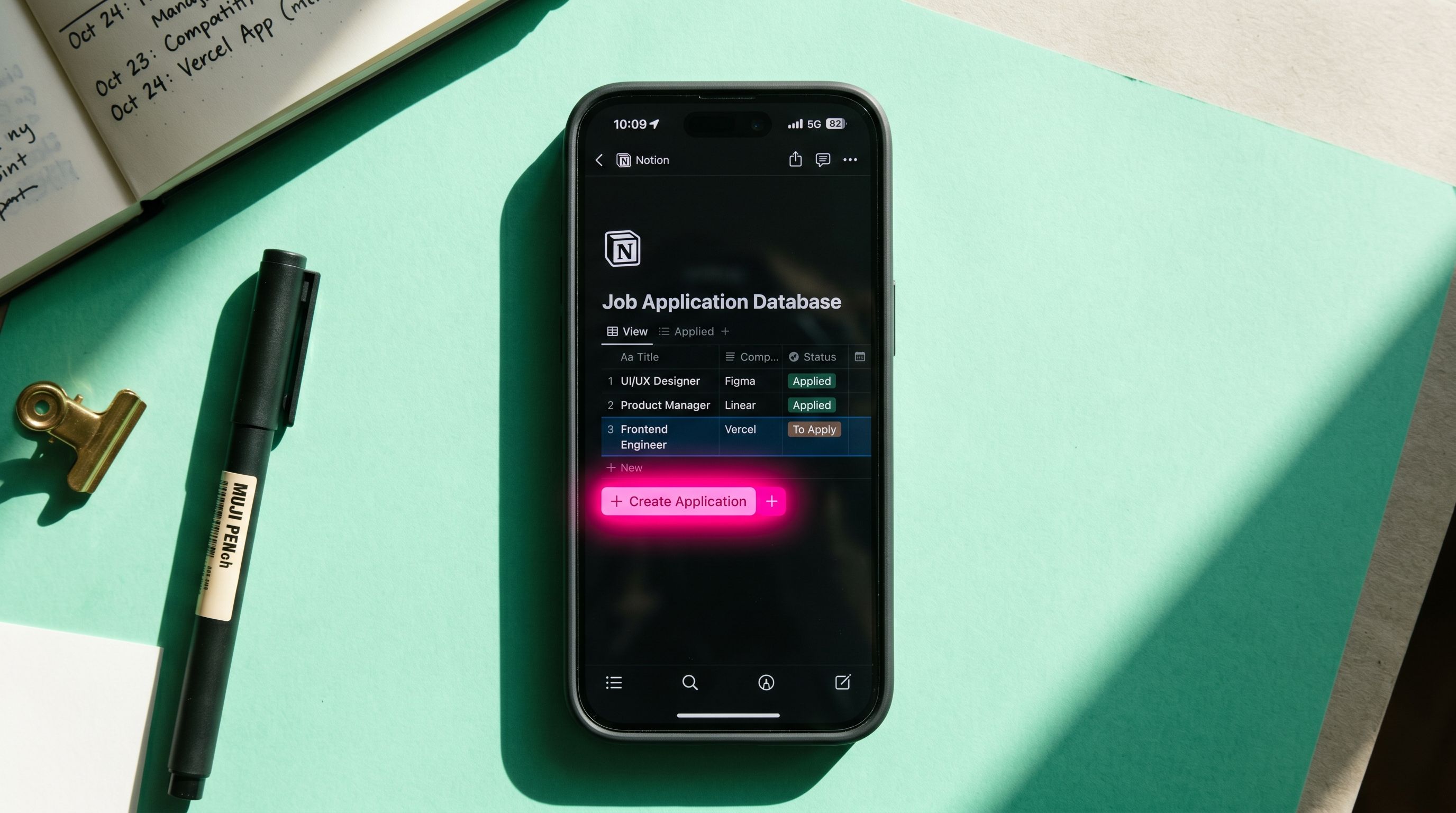

The third was actionability. A pipeline that produces a 300-row CSV every week is not intelligence; it's homework. The output had to be a Notion dashboard with the four-dimension surface up top (trends, competitors, complaints, pricing), each with KPIs, charts, and bulleted takeaways, and the raw data exportable as CSV underneath. The point is that the founders open the dashboard on Monday morning and read a one-page brief, not that they spend an hour analysing a spreadsheet.

The approach

How did Tincture frame the problem?

A weekly cron job, idempotent pipeline, four-dimension Notion output. The cron is GitHub Actions, scheduled at 08:30 UK time every Monday. The pipeline pulls posts and comments from Reddit through PRAW (the Python Reddit API Wrapper), deduplicates against a Supabase Postgres store of prior weeks' data, runs rule-based extraction on the structured entities, runs LLM-assisted clustering on the harder dimensions (complaints, sentiment), and pushes a structured report to a Notion dashboard via the Notion API.

The architectural rule was that the pipeline should never require human intervention to run, but always produce output a human could act on. Idempotency for the first half (run forever, never break). Concise four-dimension summary for the second half (read once, decide).

The complaints dimension is the part the brand uses most. Rather than just monitoring sentiment, the pipeline clusters complaints into recurring themes (certification turnaround, vendor service quality, pricing transparency, shipping issues) and surfaces them as a weekly list. Adamas reads that list and decides which complaints to address proactively: through clearer product copy, faster service, content that pre-empts the issue, or operational changes. The intelligence isn't passive monitoring; it's an early-warning system for the gaps competitors are creating.

The trend detection layer compares against rolling four-week and twelve-week averages and flags movements that exceed a threshold. A vendor whose share of voice doubled this week is news. A complaint cluster that's growing week-on-week is news. Stable signals are not. The dashboard surfaces only the news.
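The comparison against rolling averages can be sketched in a few lines. This is an assumed version: the write-up does not state the threshold, so the example uses a ratio of 2.0 to match the "doubled this week" case, and the function and flag names are hypothetical.

```python
from statistics import mean


def flag_movement(history: list[float], current: float, ratio: float = 2.0) -> list[str]:
    """Compare this week's value with rolling 4- and 12-week averages.

    `history` holds prior weekly values, oldest first, excluding the current
    week. A flag is raised when the current value is at least `ratio` times
    the baseline, or at most 1/ratio of it. Stable signals return no flags.
    """
    flags = []
    for label, window in (("4w", 4), ("12w", 12)):
        if len(history) < window:
            continue  # not enough history for this baseline yet
        baseline = mean(history[-window:])
        if baseline == 0:
            if current > 0:
                flags.append(f"new_vs_{label}")
            continue
        change = current / baseline
        if change >= ratio:
            flags.append(f"up_vs_{label}")
        elif change <= 1 / ratio:
            flags.append(f"down_vs_{label}")
    return flags


weekly_mentions = [10] * 12                    # a vendor with steady mentions
doubled = flag_movement(weekly_mentions, 25)   # flagged against both baselines
steady = flag_movement(weekly_mentions, 11)    # no flags: not news
```

Only entities with a non-empty flag list would reach the dashboard, which is how the "surface only the news" rule falls out of the arithmetic.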

The build

What was shipped?

A Python pipeline with PRAW for Reddit ingestion, Supabase Postgres for state, ChatGPT API for the LLM-assisted clustering layer, GitHub Actions for scheduling, and the Notion API for output delivery. Idempotent end-to-end with deduplication, rate limiting, and retry logic.
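The retry logic is not detailed here, so the following is only a generic sketch of retry with exponential backoff, the common pattern for wrapping flaky network calls. The helper name, attempt count, and delays are assumptions, and real code would catch narrower exception types than `Exception`.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retry(
    fn: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `fn`, retrying on failure with delays of 1s, 2s, 4s, ...

    The last failure is re-raised so the scheduler can record the error.
    `sleep` is injectable so the backoff can be tested without waiting.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
    raise RuntimeError("attempts must be at least 1")


# Usage: a call that fails twice, then succeeds on the third attempt.
calls = {"n": 0}
delays: list[float] = []


def flaky_fetch() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


result = with_retry(flaky_fetch, sleep=delays.append)
```

Combined with idempotent writes, a retried step can safely repeat work that partially completed the first time.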

Five subreddits monitored weekly, covering the full lab-grown diamond customer conversation. Roughly 1,000 to 1,500 posts and 20,000 comments per pull, totalling 30,000 to 40,000 individual data points per week.

Rule-based entity extraction across the categories Adamas needed: vendors, shapes, settings, metals, prices, sizes, certifications. LLM-assisted clustering for the unstructured dimensions: complaint themes, sentiment, emerging product preferences. The structured outputs feed the four-dimension dashboard layer.

A weekly Notion dashboard with the four-dimension surface up top: trends, competitor share of voice, common complaints (clustered with proactive-resolution suggestions), and qualitative pricing intelligence. KPI cards, charts, bulleted takeaways, and CSV exports underneath for deeper analysis. Trend detection comparing this week's numbers against rolling four-week and twelve-week averages, flagging only the movements that matter.

GitHub Actions cron scheduled at 08:30 UK every Monday. Errors logged to a Notion tasks database for manual review when the pipeline misbehaves.

The outcome

What were the results?

Replaced manual Reddit monitoring entirely. Replaced the paid alert tooling the team had been using. The intelligence layer that used to require an hour every week of manual scrolling now lands in Notion every Monday morning, structured across the four dimensions that drive commercial decisions.

Trend detection earns its keep on emerging signals: shifts in shape preference, new vendor entrants, category-level movements. Competitor share of voice gives the brand visibility into who's getting talked about and how, week to week. Qualitative pricing intelligence (price medians, ranges, what customers consider fair) feeds directly into pricing decisions and competitive positioning.

The complaints dimension is the most operationally valuable. Customers complaining across the category about, say, certification turnaround times or vendor service responsiveness become a list Adamas reads on Monday and acts on by Wednesday: clearer product copy, faster service, content that pre-empts the complaint, operational changes that turn a category-wide pain point into a brand-level advantage. Most competitors are not doing this. The intelligence is not just monitoring; it's a proactive customer service input.

The compounding outcome is the dataset itself. Every week the pipeline runs, the historical dataset grows, and the trend detection gets sharper. By month three, the pipeline could distinguish between noise and movement on individual entities and complaint clusters. By month six, it could spot emerging vendors and emerging complaints before they hit the broader DTC trade press.

What it took

What tools and methods were used?

Python as the pipeline language. PRAW (Reddit API wrapper) for ingestion. Supabase Postgres for state and deduplication. ChatGPT API for the LLM-assisted clustering layer (used for complaint themes, sentiment, novel terms). GitHub Actions for scheduling. Notion API for dashboard delivery. SendGrid planned for email digest delivery as the second surface.

The methodological underpinning is the practice's pattern for data pipelines: idempotent at the input, structured in the middle, surfaced concisely at the output. Most market intelligence pipelines fail not because the data is wrong but because nobody reads the output. The dashboard layer is doing as much work as the extraction layer; if the founder doesn't open it on Monday, the pipeline didn't run.

The other move worth naming: rule-based first, LLM second. Running 30-40k data points per week through a language model is expensive and unnecessary for the structured entities. Most entity extraction in a structured category (vendors, products, prices) can be pattern-matched. Reserve the LLM for the hard cases (complaint clustering, sentiment, novel terms). The cost stays in proportion to the value.
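One way to picture "rule-based first, LLM second" is as a router: cheap rules label what they can, and only the leftovers are batched for the model. The sketch below assumes that shape. The theme patterns are illustrative, and `llm_cluster` is a hypothetical callable standing in for the real API call, which is stubbed out here.

```python
import re
from typing import Callable

# Illustrative keyword rules for two of the recurring complaint themes.
THEME_RULES = {
    "certification turnaround": re.compile(
        r"\b(cert\w*|report)\b.*\b(delay\w*|weeks?|waiting)\b", re.IGNORECASE
    ),
    "shipping issues": re.compile(
        r"\b(shipping|shipment|delivery|customs)\b", re.IGNORECASE
    ),
}


def route(
    comments: list[str],
    llm_cluster: Callable[[list[str]], dict[str, list[str]]],
) -> tuple[dict[str, list[str]], int]:
    """Label comments by rule where possible; send only the rest to the LLM.

    Returns the themed comments and how many comments needed the LLM, which
    is the number that drives cost.
    """
    themed: dict[str, list[str]] = {}
    leftovers: list[str] = []
    for comment in comments:
        theme = next(
            (name for name, rx in THEME_RULES.items() if rx.search(comment)), None
        )
        if theme is None:
            leftovers.append(comment)
        else:
            themed.setdefault(theme, []).append(comment)
    if leftovers:
        for theme, items in llm_cluster(leftovers).items():
            themed.setdefault(theme, []).extend(items)
    return themed, len(leftovers)


def stub_llm(items: list[str]) -> dict[str, list[str]]:
    """Stand-in for the model call; groups everything under one theme."""
    return {"vendor service quality": list(items)}


themed, llm_count = route(
    [
        "My cert has been delayed for three weeks",
        "Shipping got stuck at customs",
        "They never answer emails",
    ],
    stub_llm,
)
```

Tracking the leftover count week to week also shows when a new rule is worth writing: a theme the model keeps returning is a candidate for a pattern.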

The takeaway

What's the transferable principle?

Most early-stage brands underuse Reddit as a market intelligence source, not because the data isn't there but because nobody's structured the pipeline to extract it across the dimensions that drive decisions. Trends are interesting. Competitor share of voice is interesting. Complaints turned into proactive operational changes are commercially significant. Pricing intelligence is foundational. The work that lands captures all four in one weekly surface.

For Adamas, that meant a Notion dashboard updated every Monday morning with the four-dimension intelligence, with the complaints layer specifically wired to drive proactive product, content, and service decisions. For a different brand, the entity types change but the architecture doesn't. Any consumer category with a forum-active customer base benefits from the same pattern.

The other transferable principle, broader than market intelligence: build for the read, not the write. The hardest part of a pipeline isn't ingesting the data; it's making the output worth reading. If the founder opens the dashboard on Monday and reads a one-page four-dimension brief instead of a 300-row CSV, the pipeline is doing its job. If the founder closes the tab in thirty seconds, the pipeline is generating data nobody acts on.

Frequently asked questions

Want a market intelligence engine for your category?

We build Reddit market intelligence engines for early-stage brands operating in forum-active consumer categories.