Job Board Scraper & Application Engine

From 200+ company career pages to a tailored application package in under two minutes

TL;DR

Tincture built an end-to-end job application automation system to address a problem the senior talent market has been struggling with in 2026: most senior candidates can't tailor decades of experience for every role they want without giving up half their week, so they either send generic applications (which don't land) or stop applying altogether. The system pairs a daily Node.js + Playwright scraper monitoring 200+ hand-picked company career pages with a Claude-powered application engine that turns any listing into a scored suitability assessment, a tailored CV, and a role-specific cover letter in under 45 seconds, triggered by a single Notion button click. Designed for the senior candidates existing job-hunting tools have left behind.

The brief

What did the client need?

The senior end of the talent market is in a structurally difficult moment. The roles that fit are competitive, hiring teams are looking at hundreds of applications per role, and the gap between an average application and a strong one is wider than it's been in years. The candidates who get traction are the ones whose CVs and cover letters read as if they were written specifically for the role. Generic doesn't land anymore.

The catch is that genuine tailoring takes time. Properly assessing fit, repositioning twenty years of experience for the specific role, writing a targeted cover letter that actually says something about why this candidate matches this opportunity, takes 45 to 90 minutes per application when done well. At any meaningful volume, that's not sustainable. So senior candidates default to one of two failure modes: send generic applications (which don't get the response rate they should) or stop applying for roles they'd actually be great at. Both options leak strong candidates out of strong searches.

Compounding the time problem is the discovery problem. Most interesting roles at VC-backed and remote-first tech companies never make it to LinkedIn or Indeed. They sit on individual company career pages across hundreds of different ATS platforms (Greenhouse, Ashby, Lever, Workable, Notion-hosted boards, custom career pages) with no single aggregator. Discovery is fragmented; monitoring is manual; the roles that fit are buried under volume.

The brief was to solve both problems end to end, in a way that respected the actual constraint senior candidates are working under: time. Surface the roles automatically, then prepare the applications automatically, with a single button click between "I want to apply for this" and "the documents are ready to review".

The constraints

What made this hard?

Three constraints. The first was ATS heterogeneity. Each of the major applicant tracking systems renders job listings differently. Greenhouse uses one structure, Ashby another, Lever another, Workable another, and the Notion-hosted boards are inconsistent enough that they need their own parser. The scraper had to handle all of them, with parsing logic per format, plus a fallback for custom career pages that don't use any standard ATS at all.

The second was assessment honesty. The temptation in any AI-driven application engine is to score every role highly because the model wants to be helpful. That defeats the purpose. The suitability scoring had to be calibrated to refuse generosity: a near-perfect match scores 9-10, a decent match scores 5-6, a role requiring qualifications a candidate doesn't hold scores at 1-2 without pretending otherwise. Honest scoring saves more time than optimistic scoring, by surfacing the roles to skip rather than the roles to apply for.

The third was voice consistency. Twenty years of experience can be repositioned for any of thirteen different skill categories (operational leadership, commercial strategy, product, RevOps, creator economy, AI and automation, etc.), but the cover letter has to read like the same person wrote it every time. The pipeline had to draw from a comprehensive career knowledge document and produce CVs and letters that read differently for every role while still sounding consistently like one applicant.

The approach

How did Tincture frame the problem?

Two pipelines, one Notion database. The scraper runs daily on a local 8am cron, navigates the 200+ career pages via Playwright, parses listings per ATS format, deduplicates against the Notion database, and pushes new structured rows. The application engine runs on demand, triggered by a Notion button.

The scraper architecture had to handle the ATS heterogeneity at the parser level. One Playwright script, multiple format-specific parsers, dispatched based on the URL pattern. Pagination, rendering approach, and field structure vary per platform; each format needed its own logic. Errors and timeouts log to a Notion tasks database for manual review rather than failing silently.

The application engine architecture is a chain. Notion button click fires a webhook through Notion automation, hits a Cloudflare Worker for authentication, forwards to a GitHub Actions workflow via repository_dispatch, which runs the Python pipeline: suitability assessment, CV tailoring advice, cover letter generation. The outputs ship as formatted .docx files to Cloudflare R2, then write back to the Notion record with the score, the assessment, and the file links. End to end in roughly 45 seconds.

The build

What was shipped?

A Node.js + Playwright scraper monitoring 200+ hand-picked companies daily across SaaS, tooling, AI, and automation. Companies hand-picked rather than fetched from a directory, because the bar for "would actually be a great place to work" is qualitative. Format-specific parsers for Greenhouse, Ashby, Lever, Workable, Notion-hosted, and custom career pages. Automated deduplication via the Notion database. Six specific role types tracked. Errors logged to a Notion tasks database.

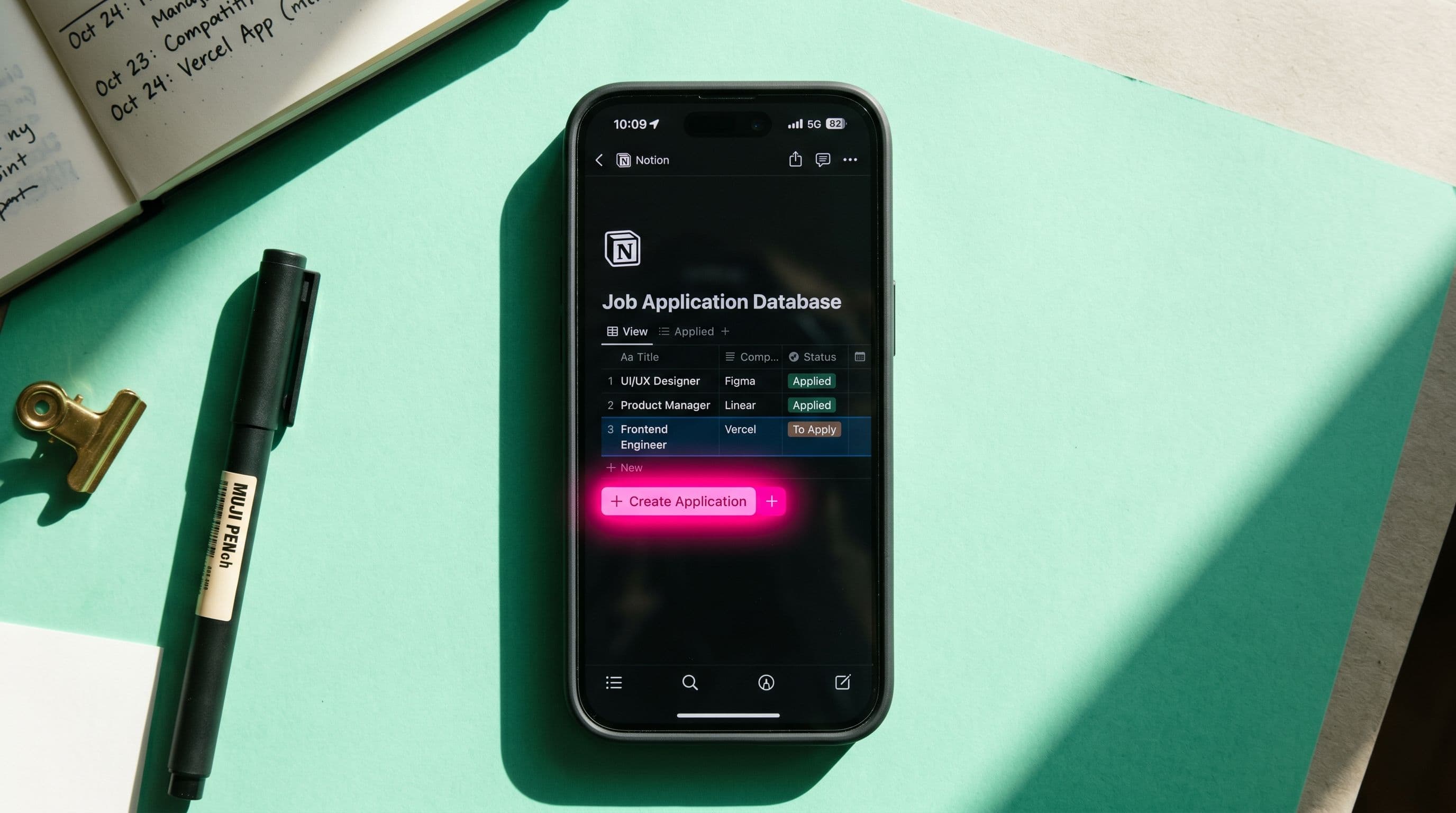

A Notion database with structured fields per listing (company, role, ATS source, URL, raw description, status), filterable and searchable, the single surface for "what's open right now".

A single Notion button per record that triggers the application engine. The engine runs a Python pipeline calling the Claude API for three jobs in sequence: suitability assessment (score, matches, positioning angles, recommendations), CV tailoring advice (section-by-section selections from a 13-skill-category career knowledge document), and cover letter suggestions (voice-matched, role-specific, narrative-hooked).

Document generation in python-docx for formatted CVs and cover letters, uploaded to Cloudflare R2 and attached back to the Notion record. The full written assessment lands in the same record. Everything in one place, ready for a final review pass.

A Cloudflare Worker authenticating the Notion webhook and forwarding to GitHub Actions via repository_dispatch. The whole architecture is event-driven; nothing polls.

The outcome

What were the results?

The system runs as designed. The scraper covers 200+ companies daily, surfacing relevant roles before they hit mainstream job boards. The application engine turns any listing into a scored, tailored, advisory narrative package in under two minutes, replacing what would otherwise be 45 to 90 minutes of manual work per role.

The compounding outcome is the calibration. The suitability scoring is honest enough to be trusted. A 2/10 means don't waste the time; a 9/10 means apply with confidence; a 5-6 means there's an angle if you want it. The system saves more time by surfacing poor-fit roles to skip than by tailoring strong-fit roles to apply for.

The strategic outcome is what the build represents for senior talent more broadly. The market has been telling senior candidates for two years that the volume of competition is up and the response rate to generic applications is down. The standard advice ("apply to more roles", "tailor every application") is mathematically incompatible at the time cost of doing both. The system doesn't ignore the math; it changes it. Tailored applications at scraper-driven volume, with the time cost of tailoring collapsed by a factor of 60.

What it took

What tools and methods were used?

Node.js and Playwright for the scraper. Python for the application engine pipeline. Claude API for the language work (assessment - Haiku, CV tailoring, cover letter generation - Sonnet). GitHub Actions for scheduling and triggering. Cloudflare Workers for webhook authentication. Cloudflare R2 for artefact storage. Notion API as the database and trigger surface. python-docx for document generation. Claude Code for the build itself.

The methodological underpinning is the practice's standard pattern for AI automations: structured inputs, scoped jobs, written outputs. The Claude API isn't asked to do everything in one shot; it's asked to do three discrete jobs (assessment, CV, cover letter) in sequence, each with its own scope and output format. Chaining beats stuffing.

The other move worth naming: honest scoring. The suitability assessment was calibrated to refuse generosity, because optimistic scoring defeats the purpose. The system explicitly recommends skipping roles that don't fit. That single calibration decision is what turns the engine from "applies to everything" to "applies to the right things".

The takeaway

What's the transferable principle?

The senior talent market is in a moment where the standard advice is breaking down. Apply to more roles, tailor every application, network into the right hiring teams; each piece of advice is correct in isolation and incompatible in combination at the time budget most senior candidates actually have. The work that lands rebuilds the math: collapses the time cost of tailoring without collapsing the quality, so the volume and the personalization can both scale.

For this build, that meant a daily scraper across 200+ companies and an application engine that turns any listing into a tailored package in 45 seconds. The same architecture pattern (scrape, score, tailor, deliver) applies to any high-volume knowledge work where the time cost of doing it well is the actual bottleneck.

The other transferable principle, broader than job applications: pipelines that take you from data to decision, not from data to more data. The scraper doesn't just collect listings; it scores them. The application engine doesn't just draft documents; it tells you whether to apply at all. Every step of the pipeline either resolves a question or feeds the next step that does. That's the difference between automation that compounds and automation that piles up.

Frequently asked questions

More like this

12 agents, ~10 issues/day, ~2 hours saved daily, cost = Claude subscription + ~$40/month VM

A 12 agent ↔ Linear ↔ Notion setup

The Operating layer was wired to Linear and Notion, paired via MCP, so each specialist agent could ship from Linear and mirror state into Notion against its per-persona write contract, with identity that survives across sessions. Stand-up took about 2.5 working days of focused build, over a week of elapsed time. Steady state: 12 agents working roughly 10 issues or tasks a day, about 2 hours of time saved daily plus the context-rebuilding tax that doesn't show up on a clock. The whole thing runs on a Hetzner VM in Helsinki, so agents pick up Linear comments and project emails whether the laptop is open or not. Cost is the Claude subscription plus about $40 a month for the VM.

A multi-database operating system replacing six tools, running an entire UK/US jewelry business.

A multi-database Notion operating system for a UK/US jewelry business

We designed and built the operating system Adamas Studio runs on, an interconnected Notion workspace covering inventory, diamonds, CRM, finance, purchase orders, customer portal, and cross-timezone coordination, with automation layered through Relay.app, custom OCR pipelines, custom automations, and Supabase edge functions. The build replaced what would normally be a stack of expensive enterprise tools with a single workspace tuned to the specific shape of a two-founder, UK/US, made-to-order jewelry business.

Featured

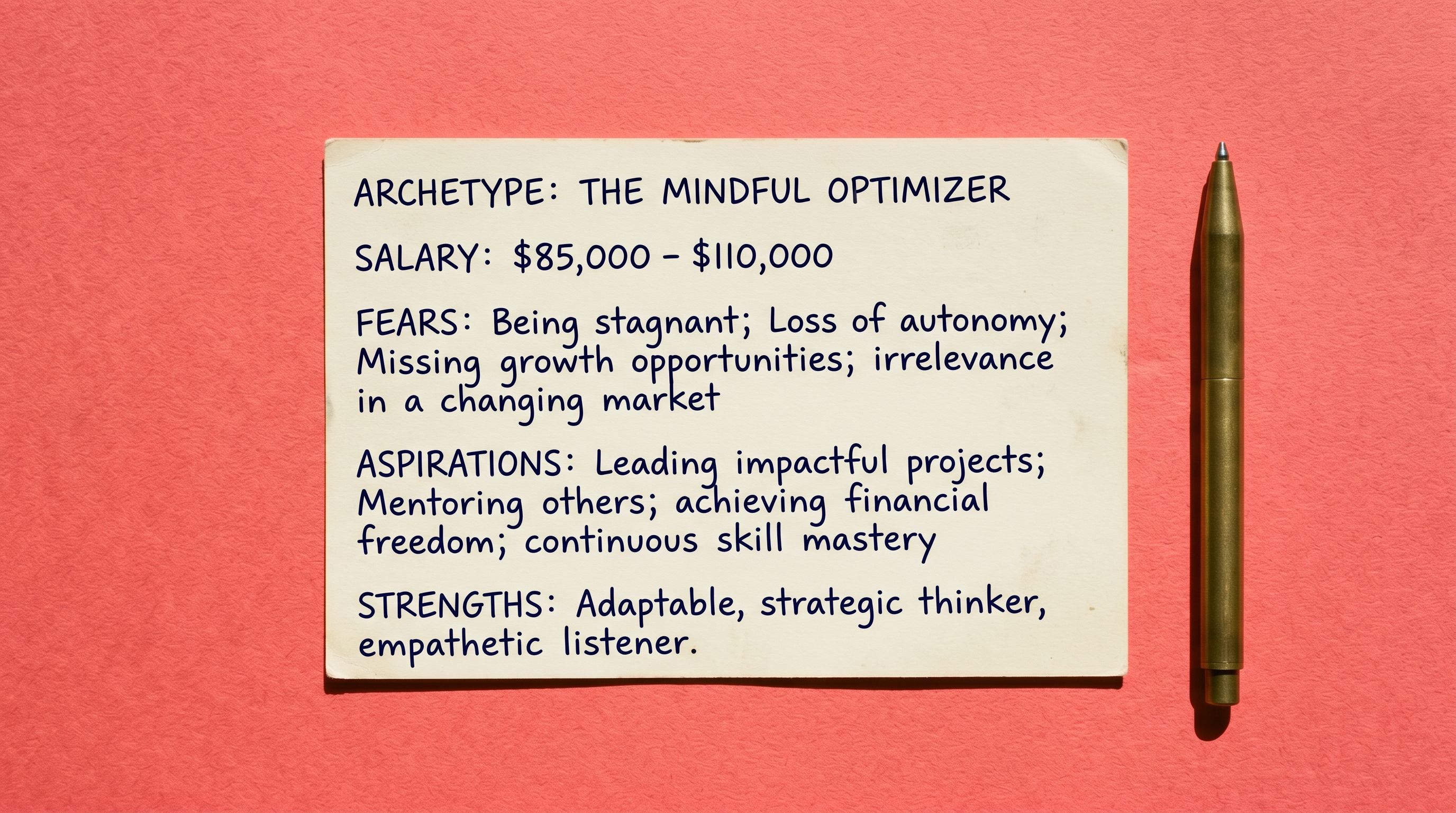

FeaturedA four-step web tool that turns a category, decision context, and emotional drivers into an archetype in under 3 minutes

Buyer Persona Generator

The Buyer Persona Generator, a four-step web tool that takes a founder through structured prompts on category, decision context, and emotional drivers, and returns a complete buyer archetype in under three minutes. Output: a specific person with name, salary, daily reality, fears, aspirations, and a closing sentence ready to drop into copy. Anchored in buyer psychology rather than demographic segmentation, designed for founders whose existing ICP work stops at "B2B SaaS, 50-500 employees" and never gets to a person.

Want a knowledge-work automation pipeline like this?

The same architecture (Notion as surface, GitHub Actions as engine, Cloudflare for auth and storage, Claude for language work) applies to most "trigger a heavy pipeline from a database row" use cases.